First posted April 2020

Introduction

I’ve been watching the President’s daily press briefings about the COVID-19 pandemic, and pretty much laughing at discussions of “the model.” The press asks almost totally ignorant questions, and frankly, the answers are almost equally ignorant. The people at the podium almost certainly don’t design or code pandemic models themselves, and they don’t seem to be very adept at explaining what they do know to the reporters.

Perhaps I can give my readers a bit of a perspective on the issue.

My own use of models

As a professional petroleum reservoir engineer, my job was to estimate how much oil and/or natural gas was underground in new or old fields and to predict if, how much, and how fast it could be “recovered” under different drilling and production plans and economic scenarios. Sometimes millions of dollars were ultimately at stake. During much of my career, I acted as a professional consultant. Sometimes my client was trying to attract investors, and sometimes I was representing investors trying to decide whether or not to join a venture. I even appeared in court hearings from time to time as an expert witness.

Whichever side of the table I was on, the core of my work was specifying, collecting and analyzing data, making calculations, and assigning risk factors, and then submitting my results and recommendations, usually in a written report.

One of the analysis tools at my disposal was mathematical modeling, otherwise known as computer modeling. Later in my career, there were some very sophisticated 3-dimensional models available, but models always have limitations, and the more complex they are, the more data and assumptions they require, and often the more likely they are to be wrong! I rarely referred to complex reservoir models except to dispute their results. On the other hand, I wrote and frequently used a simpler 2-dimensional model of my own that proved very accurate when used properly.

How models work

If you’re as old as I am, you no doubt recall that weather forecasters used to be the butt of jokes, because they were almost always wrong. Now they use computer models, and the forecast for “tomorrow” is almost always pretty close to right on the money. This is because the weather is subject to known physical laws. Suppose you know, for a given point and many other points around it, the direction and magnitude of the barometric pressure gradient, the temperature and the humidity, and other factors like the ground topography and stored ground temperatures, and you have a good idea of how those conditions vary above you. Then you can plug all that data into a proven model running on a fast computer and be pretty confident of its predictions over a short period of time. Unfortunately, the accuracy of any model’s predictions declines rapidly as you “look” farther into the future, because small errors in the starting data compound with every step forward. That is why the prediction for Saturday’s weather changes by the day, and even by the hour, as you watch from Sunday through Friday.

All models incorporate more than just data. They also require assumptions to be made, and they are subject to data errors and varying conditions at the data points and boundaries. Also, no two modelers will incorporate exactly the same data points, assumptions, boundary conditions, or even mathematical algorithms. Sometimes one particular model will come up with more accurate predictions than others, but other times a different model excels. Your local meteorologist will subscribe to as many models as possible, reject the ones he or she doesn’t trust, and either use the favorite or average the results from several.
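For readers who like to see an idea in code, here is a minimal sketch of that "reject some, average the rest" approach. It is not how any real forecasting office works; the model names and temperatures are made up purely for illustration, and `combine_forecasts` is a hypothetical helper.

```python
from statistics import mean


def combine_forecasts(forecasts, distrusted=()):
    """Average the forecasts from every model that is not in `distrusted`."""
    kept = [value for name, value in forecasts.items() if name not in distrusted]
    if not kept:
        raise ValueError("no trusted models left to average")
    return mean(kept)


# Hypothetical high-temperature forecasts (degrees F) from three made-up models.
forecasts = {"model_a": 71.0, "model_b": 74.0, "model_c": 90.0}

# The forecaster does not trust model_c today, so it is left out of the average.
consensus = combine_forecasts(forecasts, distrusted={"model_c"})
print(f"Consensus forecast: {consensus:.1f} F")
```

A plain average treats every trusted model equally. A forecaster who trusts one model more than another could weight them instead, but that is one more assumption to defend.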

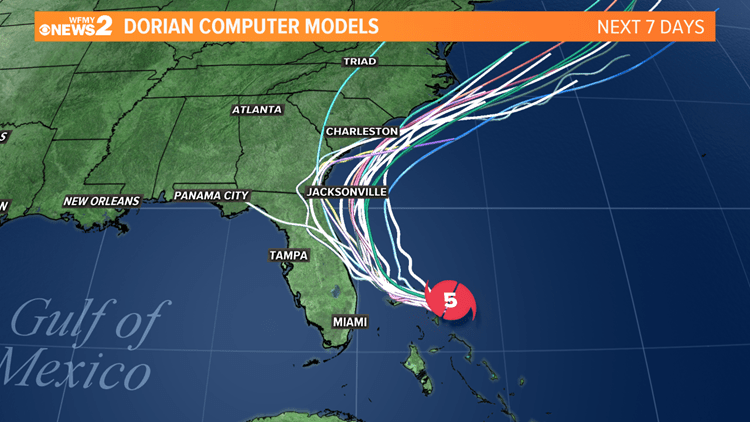

An example demonstrates how models vary from each other, and over time: last fall, Hurricane Dorian was threatening the eastern and Gulf coasts of America. Storm model data is frequently presented in the form of “spaghetti charts”, showing the predictions of multiple models, as shown above. By comparing all the models, the familiar composite “cone of probability” map can be constructed. Early modeling indicated that the storm would most likely cross over Florida and hit the Gulf Coast. Later models showed, correctly, that it would instead turn north and threaten the Atlantic coast. Models are only an educated “best guess” about the future. The earlier models weren’t “wrong” as much as they were lacking in data.

Using model results

As an engineer, I normally had only a single model to work with. The data going into a model is factual, and can usually only be updated for new readings and corrections. Assumptions, though, are subjective and therefore imprecise. Proper use of a model requires plugging in a range of reasonable values for the parameters (assumptions) and constructing “best case”, “worst case”, and “most likely” conclusions.
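To make the idea concrete, here is a toy sketch of running one model over a range of assumptions. It is not my reservoir model or anyone else's; the one-line "model", the volume figure, and the three recovery fractions are all hypothetical numbers chosen only to show the mechanics.

```python
def recoverable_volume(volume_in_place, recovery_factor):
    """Toy model: recoverable volume = volume in place * assumed recovery fraction."""
    return volume_in_place * recovery_factor


def run_scenarios(volume_in_place, assumptions):
    """Run the same model once for each labeled value of the assumed parameter."""
    return {
        label: recoverable_volume(volume_in_place, factor)
        for label, factor in assumptions.items()
    }


# The "data": a hypothetical estimate of barrels in place, held fixed.
measured_volume = 10_000_000

# The "assumptions": a hypothetical range of reasonable recovery fractions.
assumed_recovery = {"worst case": 0.15, "most likely": 0.25, "best case": 0.35}

scenarios = run_scenarios(measured_volume, assumed_recovery)
for label, volume in scenarios.items():
    print(f"{label}: {volume:,.0f} barrels")
```

The data stays the same in every run; only the assumption changes. The spread between the worst and best cases is a rough measure of how much the conclusion depends on judgment rather than on measurement.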

Which of these conclusions I stress to a client depends on my own experience and instincts, and whether my client is a buyer or a seller.

Modeling COVID-19

One reporter at the President’s press conference yesterday asked why, given how good the death prediction seems now, the predicted number of total cases seems to be so far off. He never really got an answer to that question. The correct answer is that the model is simply not that good for this disease. There are, and always will be, too many unknowns and assumptions for a model like this. If the CDC understood the disease better, as they eventually will, they could improve on the results, but they will never be able to account for all the social-distancing cheating, accidental contacts, boundary violations, and unexpected cures and exacerbations. A model like this can show qualitatively what to expect, but quantitatively, the best that can be expected is a ballpark cone of possibilities.

Ideally, the government should release all of the results from all models. That is probably never going to happen, because governments realistically have to consider not only facts, but also security, morale, and even political fallout. That is perfectly legitimate when the actual truth is not even known with reasonable certainty. A President, if he’s not a complete political hack, has to strike a balance between urging caution and causing panic, while operating behind the scenes to achieve the best results, however the disease progresses. The CDC, by its nature, is always going to present a pessimistic worst case.